What the Clawdbot Craze Teaches B2B AI Founders About 'The Privacy Premium'

How a viral open-source agent proved that data sovereignty is the new competitive moat in enterprise AI

The enterprise AI landscape is undergoing a fundamental transformation. For years, the default architecture was simple: send your data to the cloud, get intelligence back. But a growing wave of founders, CIOs, and developers are rejecting that trade-off. They want intelligence without surrendering control. And nothing illustrates this shift more clearly than the Clawdbot phenomenon.

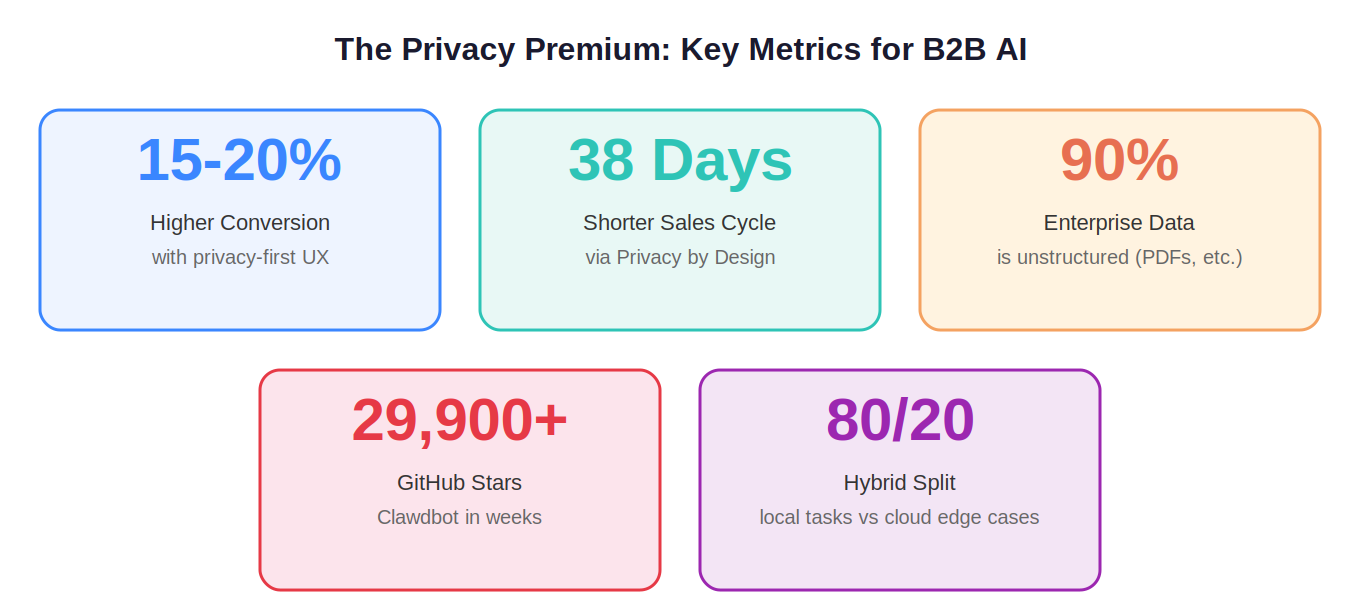

Clawdbot, a self-hosted AI assistant built by veteran developer Peter Steinberger, exploded onto the scene with over 29,900 GitHub stars in just weeks. Later rebranded to OpenClaw after trademark disputes, the project wasn't just another chatbot. It was an agentic system that ran 24/7 on local hardware, typically an Apple Silicon Mac Mini, with persistent memory, full file-system access, and proactive capabilities that cloud chatbots simply cannot offer. The demand was so fierce it contributed to hardware shortages for the Mac Mini.

For B2B AI founders, Clawdbot's rise carries a message that's impossible to ignore: data sovereignty is no longer a niche concern; it's a primary go-to-market advantage. Welcome to the era of the "Privacy Premium."

From Reactive Chatbots to Proactive Agents

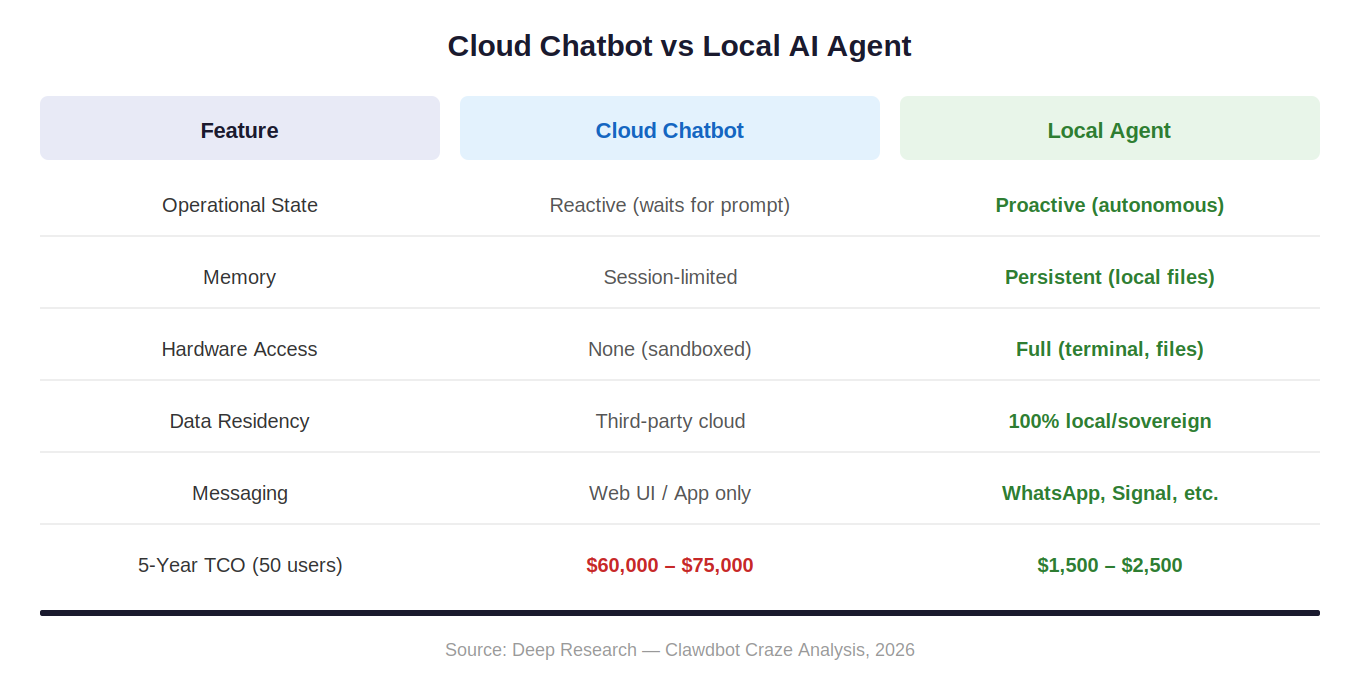

To understand why Clawdbot resonated so deeply, you need to understand what it replaced. Traditional cloud chatbots like ChatGPT or the standard Claude interface are fundamentally reactive; they sit idle until a user types a prompt. They operate within the sandboxed confines of a browser tab, with session-limited memory and zero access to your local system.

Clawdbot inverted every one of those constraints. Its architecture introduced "proactive heartbeats": scheduled, autonomous check-ins that could send morning briefings via WhatsApp, monitor flight statuses, or flag anomalies in real-time data. It stored memory persistently in local Markdown files. It could execute shell commands, manage calendars, browse the web, and even conduct autonomous research, all while keeping its memory and files on your own machine.
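To make the heartbeat-plus-local-memory idea concrete, here is a minimal Python sketch. It is not Clawdbot's actual implementation: the function names (`heartbeat_due`, `run_heartbeat`, `append_memory`), the memory file layout, and the shape of the checks are all assumptions chosen for illustration.

```python
from datetime import datetime
from pathlib import Path
from typing import Callable, Dict, List, Optional


def append_memory(memory_file: Path, note: str, now: datetime) -> None:
    """Append one timestamped bullet to a local Markdown memory file."""
    memory_file.parent.mkdir(parents=True, exist_ok=True)
    with memory_file.open("a", encoding="utf-8") as f:
        f.write(f"- {now.isoformat(timespec='seconds')} {note}\n")


def heartbeat_due(last_run: Optional[datetime], now: datetime, interval_s: int) -> bool:
    """True if no heartbeat has run yet or the interval has elapsed."""
    return last_run is None or (now - last_run).total_seconds() >= interval_s


def run_heartbeat(
    memory_file: Path,
    checks: Dict[str, Callable[[], Optional[str]]],
    now: datetime,
) -> List[str]:
    """Run every check; persist and return only the non-empty findings.

    Each check is a hypothetical callable that returns a short message
    when something is worth reporting, or None when there is nothing new.
    """
    findings: List[str] = []
    for name, check in checks.items():
        result = check()
        if result:
            line = f"{name}: {result}"
            findings.append(line)
            append_memory(memory_file, line, now)
    return findings
```

In a real agent, a scheduler (cron, launchd, or a long-running loop) would call `heartbeat_due` and then `run_heartbeat`, and the findings would be pushed to a messaging channel. The sketch leaves both out so that it stays runnable without external services.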

This wasn't just a technical upgrade. It represented a philosophical shift from AI-as-a-service to AI-as-infrastructure: a tool you own and control, not one you rent from a third party.

Defining the Privacy Premium

The "Privacy Premium" is the tangible economic value that enterprise customers assign to tools that guarantee data sovereignty. It's the premium they're willing to pay, or more precisely, the procurement friction they're willing to waive, when a product proves that sensitive data never leaves their environment.

The numbers back this up. Research shows that transparent, user-centric privacy practices deliver 15–20% higher conversion rates. Startups that adopt a "Privacy by Design" framework can shorten enterprise sales cycles by up to 38 days, because they bypass the exhaustive security audits that typically stall cloud-based SaaS procurement. Meanwhile, 90% of enterprise data remains unstructured (PDFs, images, videos), representing a massive opportunity for privacy-first AI to unlock value where cloud tools often can't.

The market has effectively bifurcated into two camps: "Ad-Tech AI," which monetizes through data harvesting and user engagement, and "Privacy Premium AI," where data protection itself is the value proposition. For B2B founders, the second camp is where the margin lives.

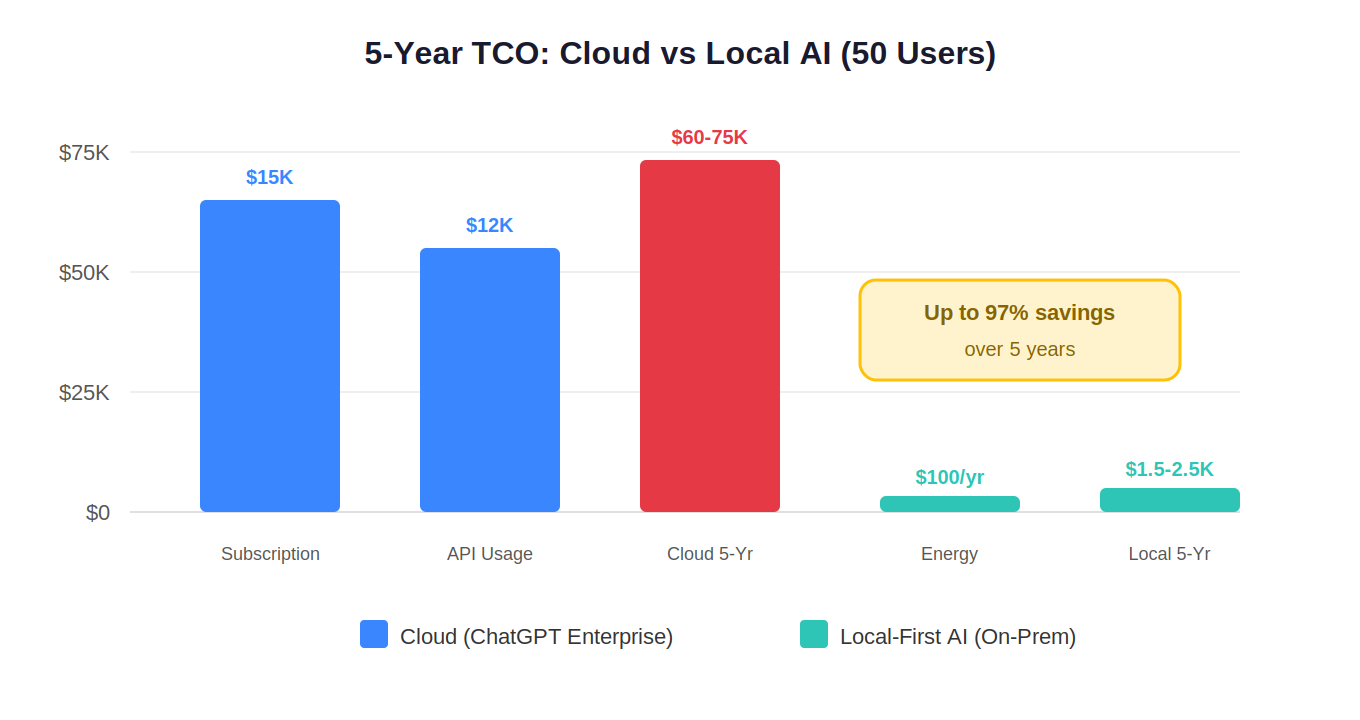

The Surprising Economics of Local AI

One of the most counterintuitive lessons from the Clawdbot craze is that local AI can be dramatically cheaper than cloud subscriptions at scale. A 50-user cloud-based AI deployment, factoring in per-seat licensing, API token usage, and storage, runs between $60,000 and $75,000 over five years. The equivalent local-first setup, after the initial hardware purchase, costs just $1,500 to $2,500 over the same period. That's a reduction of roughly 96% to 98% in recurring costs, though the comparison excludes the upfront hardware spend.

The economics flip from an OPEX-heavy model (recurring subscriptions that escalate with usage) to a CAPEX-focused one (upfront hardware with negligible ongoing costs, roughly $20–50 per machine annually in electricity). For CFOs navigating unpredictable per-token pricing from major API providers, this predictability is enormously attractive.
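The cost comparison above reduces to simple arithmetic, sketched here in Python using only the five-year ranges quoted in this section. The `hardware_upfront` parameter is an assumption added for illustration, since the quoted local figures exclude the initial hardware purchase; any value passed to it is hypothetical.

```python
from typing import Tuple

# Five-year ranges quoted in the text for a 50-user deployment (USD).
CLOUD_5YR: Tuple[float, float] = (60_000, 75_000)
LOCAL_RUNNING_5YR: Tuple[float, float] = (1_500, 2_500)


def reduction_pct(cloud_total: float, local_running: float,
                  hardware_upfront: float = 0.0) -> float:
    """Percentage saved versus the cloud total over the same period."""
    if cloud_total <= 0:
        raise ValueError("cloud_total must be positive")
    return (1 - (local_running + hardware_upfront) / cloud_total) * 100


def reduction_bounds(cloud: Tuple[float, float], local: Tuple[float, float],
                     hardware_upfront: float = 0.0) -> Tuple[float, float]:
    """Worst and best case across the quoted ranges.

    Worst case pairs the cheapest cloud total with the costliest local
    total; best case pairs the reverse.
    """
    worst = reduction_pct(cloud[0], local[1], hardware_upfront)
    best = reduction_pct(cloud[1], local[0], hardware_upfront)
    return worst, best


low, high = reduction_bounds(CLOUD_5YR, LOCAL_RUNNING_5YR)
print(f"Recurring-cost reduction: {low:.1f}% to {high:.1f}%")
```

With the quoted figures, the recurring-cost reduction falls between about 95.8% and 98.0%. Passing a nonzero `hardware_upfront` shows how quickly the headline number moves once the initial purchase is counted, which is worth modeling before quoting a single percentage to a CFO.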

Beyond cost, there's the sovereignty argument. When an enterprise depends on a single cloud provider, it inherits that provider's roadmap changes, pricing shifts, and outages. Worse, there's the persistent anxiety around "training data risk": the possibility that proprietary data could inadvertently train future public models. Several major technology companies barred employees from using cloud agents on work machines in late 2025 for exactly this reason.

Small Models, Big Impact

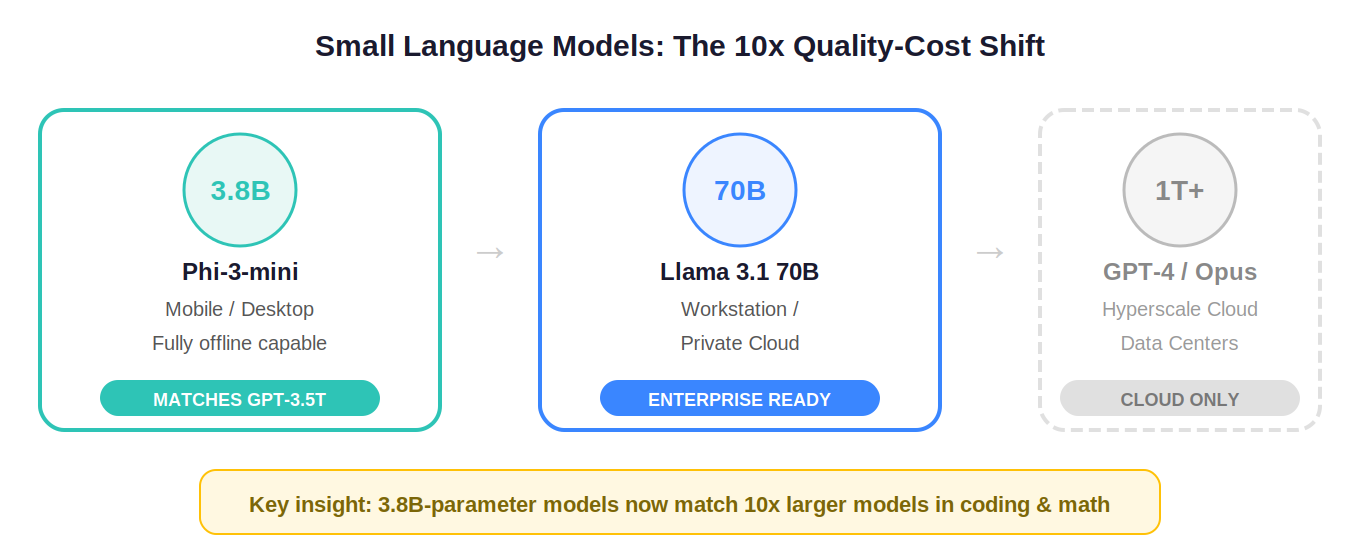

The conventional objection to local AI has always been performance: local hardware can't match hyperscale data centers. But the Small Language Model movement is dismantling that assumption. Microsoft's Phi-3-mini, with just 3.8 billion parameters, has been reported to rival models roughly ten times its size, like GPT-3.5 Turbo, on coding and math benchmarks. Comparable quality from a model a fraction of the size means sophisticated reasoning can now happen directly on consumer hardware, with no network round trip.

For B2B founders building privacy-first products, this collapses the technical barrier to entry. You can now offer fully private reasoning with no network round trip for use cases like field engineering assistants, healthcare diagnostic tools, or financial compliance agents. These are scenarios where internet connectivity may be unreliable and data privacy is non-negotiable.

Governance as a Growth Engine

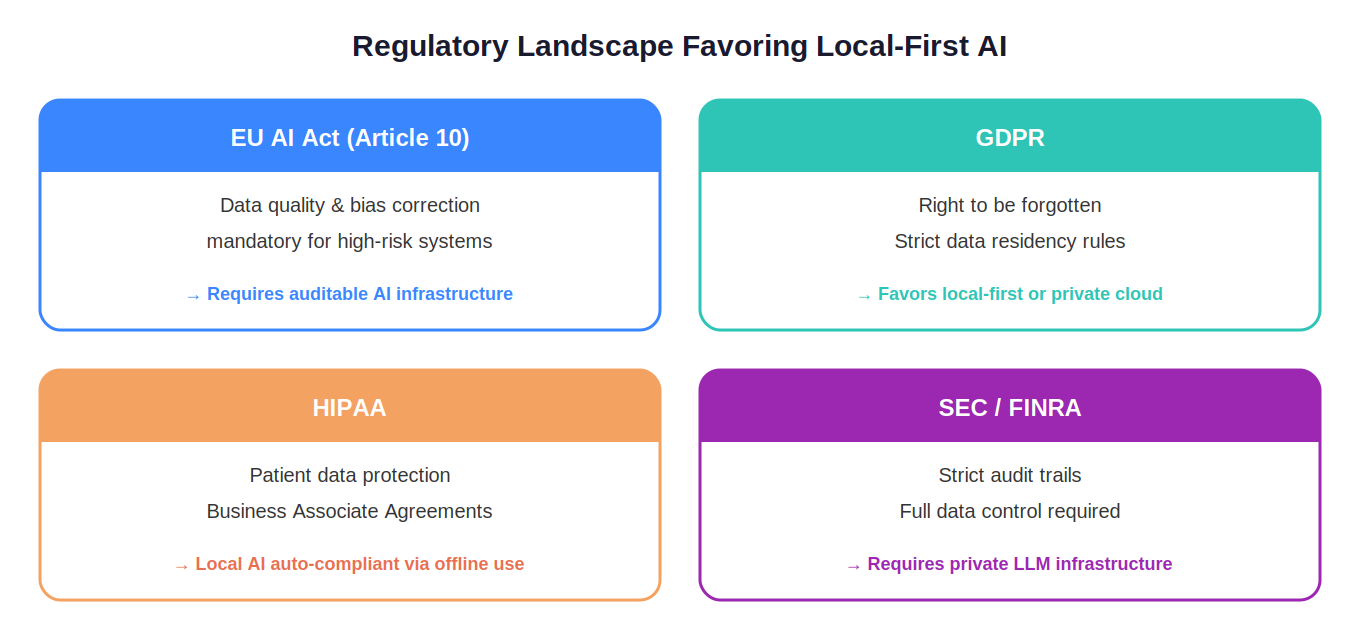

The regulatory landscape has shifted from treating AI governance as a compliance checkbox to recognizing it as a competitive differentiator. The EU AI Act, GDPR, HIPAA, and SEC/FINRA requirements all increasingly favor architectures where data stays within organizational boundaries.

Founders who design for auditability from day one, implementing frameworks like ISO/IEC 42001 or the NIST AI RMF, are finding that governance accelerates their sales pipeline rather than slowing it down. In regulated sectors like finance, healthcare, and defense, being able to prove the origin, quality, and safety of your AI's data isn't just a nice-to-have. It's the reason you win the deal.

The Founder's Playbook

The path forward for B2B AI founders is increasingly clear. First, embrace the hybrid model: most power users already run 80% of tasks locally and reserve cloud for the remaining 20% of edge cases requiring frontier-scale reasoning. Build products that support this workflow natively. Second, standardize on open protocols like Model Context Protocol (MCP) so your agents are interoperable by default. Third, monetize through trust: offer predictable pricing anchored to the value of data sovereignty, not per-token metering that creates CFO anxiety.
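The hybrid model in the first point can be sketched as a small routing policy. This is an illustrative assumption, not a description of any particular product: the `Task` fields, the `route` function, and the rule that sensitive data never leaves the machine are all hypothetical choices a founder would tune to their own requirements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    prompt: str
    contains_sensitive_data: bool
    needs_frontier_reasoning: bool


def route(task: Task) -> str:
    """Decide where a task runs: 'local' by default, 'cloud' as the exception.

    Assumed policy: sensitive data always stays local, even when a
    frontier model might answer better. Only non-sensitive tasks that
    explicitly need frontier-scale reasoning go to the cloud.
    """
    if task.contains_sensitive_data:
        return "local"
    if task.needs_frontier_reasoning:
        return "cloud"
    return "local"


tasks = [
    Task("Summarize this customer contract", True, False),
    Task("Draft a novel proof strategy", False, True),
    Task("Rename files in ./reports", False, False),
    Task("Analyze patient records for anomalies", True, True),
]
for t in tasks:
    print(f"{route(t):5s} <- {t.prompt}")
```

Checking sensitivity first is the design choice that matters: it makes data sovereignty a hard constraint and model quality a preference, rather than the other way around.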

Companies like Block (with its open-source Goose agent framework) and Virgin Atlantic (with its AI travel concierge) are already proving that the agentic, privacy-first model works at enterprise scale. This isn't theoretical. It's the new standard.

The Bottom Line: The Clawdbot craze wasn't just a viral moment; it was a market signal. Enterprise buyers are telling founders, loudly and clearly, that they want AI that's intelligent, autonomous, and sovereign. The Privacy Premium isn't a niche. It's the foundation of the next multi-billion-dollar enterprise software category. The founders who understand that your data should live at your fingertips, not behind a loading bar, will be the ones leading the agentic revolution.

Published by Thenga Labs